PROJECT DIRECTIONS

PUBLICATIONS

[P22] Valerie Fanelle*, Sepideh Karimi*, Aditi Shah*, Bharath Subramanian* and Sauvik Das. Blind But Human: Exploring More Usable Audio CAPTCHA Designs. (To appear) In Proceedings of the Sixteenth Symposium on Usable Privacy and Security (SOUPS), 2020. (*authors contributed equally)

[P21] Hue Watson, Eyitemi Moju Igebene, Akansha Kumari and Sauvik Das. “We Hold Each Other Accountable”: Unpacking How Social Groups Approach Cybersecurity and Privacy Together. In Proceedings of the 38th SIGCHI Conference on Human Factors in Computing Systems (CHI), 2020. Best Paper honorable mention (top 5% of submissions)

[P20] Sauvik Das, David Lu, Taehoon Lee, Joanne Lo and Jason Hong. The Memory Palace: Exploring Visual-Spatial Paths for Strong, Memorable, Infrequent Authentication. In Proceedings of the 32nd Annual ACM Symposium on User Interface Software and Technology (UIST), 2019.

[P19] Sauvik Das, Laura Dabbish and Jason Hong. A Typology of Perceived Triggers for End-User Security and Privacy Behaviors. In Proceedings of the 15th International Symposium on Usable Privacy and Security (SOUPS), 2019.

[P18] Sauvik Das, Joanne Lo, Laura Dabbish and Jason Hong. Breaking! A Typology of Security and Privacy News and How It's Shared. In Proceedings of the 36th SIGCHI Conference on Human Factors in Computing Systems (CHI), 2018.

[P17] Jason Wiese, Sauvik Das, John Zimmerman and Jason Hong. Evolving the Ecosystem of Personal Behavioral Data. HCI Journal Special Issue on The Examined Life: Personal Uses for Personal Data, 2017.

[P16] Sauvik Das, Gierad Laput, Chris Harrison and Jason I. Hong. Thumprint: Socially-Inclusive Local Group Authentication Through Shared Secret Knocks. In Proceedings of the 35th SIGCHI Conference on Human Factors in Computing Systems (CHI), 2017. Best Paper honorable mention (top 4% of submissions)

[P15] Sauvik Das. Social Cybersecurity: Understanding and Leveraging Social Influence to Increase Security Sensitivity. German Journal of it – Information Technology Special Issue on Usable Security and Privacy, 2016. Invited paper

[P14] Sauvik Das, Jason Wiese and Jason I. Hong. Epistenet: Facilitating Programmatic Access & Processing of Semantically Related Personal Mobile Data. In Proceedings of the 18th International Conference on Human-Computer Interaction with Mobile Devices and Services (MobileHCI), 2016. (Acceptance Rate: 23%).

[P13] Alexander de Luca, Sauvik Das, Iulia Ion, Martin Ortlieb and Ben Laurie. Expert and Non-Expert Attitudes towards (Secure) Instant Messaging. In Proceedings of the 12th International Symposium on Usable Privacy and Security (SOUPS), 2016.

[P12] Haiyi Zhu, Sauvik Das, Yiqun Cao, Shuang Yu, Aniket Kittur and Robert Kraut. A Market in Your Social Network: The Effects of Extrinsic Rewards on Friendsourcing and Relationships. In Proceedings of the 34th SIGCHI Conference on Human Factors in Computing Systems (CHI), 2016. (Acceptance Rate: 23%) Best Paper honorable mention (top 4% of submissions)

[P11] Sauvik Das, Jason I. Hong and Stuart Schechter. Testing Computer-Aided Mnemonics and Feedback for Fast Memorization of High-Value Secrets. In Proceedings of the NDSS Workshop on Usable Security (USEC), 2016.

[P10] Sauvik Das, Alexander Zook, and Mark Riedl. Examining Game World Topology Personalization. In Proceedings of the 33rd SIGCHI Conference on Human Factors in Computing Systems (CHI), 2015. (Acceptance Rate: 23%)

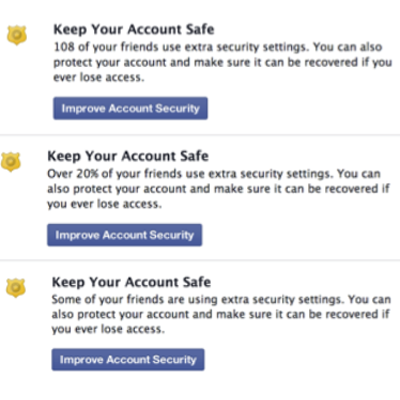

[P9] Sauvik Das, Adam Kramer, Laura Dabbish and Jason I. Hong. The Role of Social Influence in Security Feature Adoption. In Proceedings of the 18th ACM Conference on Computer Supported Cooperative Work (CSCW), 2015. (Acceptance Rate: 28.3%)

[P8] Sauvik Das, Adam Kramer, Laura Dabbish and Jason I. Hong. Increasing Security Sensitivity with Social Proof: A Large Scale Experimental Confirmation. In Proceedings of the 21st Conference on Computer and Communications Security (CCS), 2014. (Acceptance Rate: 19.5%). Honorable mention for NSA best scientific cybersecurity paper in 2014 (Top 3 out of 50 anonymous nominations)

[P7] Sauvik Das, Tiffany Hyun-Jin Kim, Laura Dabbish and Jason I. Hong. The Effect of Social Influence on Security Sensitivity. In Proceedings of the 10th International Symposium on Usable Privacy and Security (SOUPS), 2014. (Acceptance Rate: 26.5%)

[P6] Eiji Hayashi, Sauvik Das, Shahriyar Amini, Jason Hong and Ian Oakley. CASA: Context-Aware Scalable Authentication. In Proceedings of the 9th International Symposium on Usable Privacy and Security (SOUPS), 2013. (Acceptance rate: 27%)

[P5] Sauvik Das, Eiji Hayashi, and Jason Hong. Exploring Capturable Everyday Memory for Autobiographical Authentication. In Proceedings of the 2013 ACM International Joint Conference on Pervasive and Ubiquitous Computing (UbiComp), 2013. (Acceptance rate: 23%). Best Paper Award (top 1% of all submissions)

[P4] Sauvik Das and Adam Kramer. Self-Censorship on Facebook. In Proceedings of the 7th International AAAI Conference on Weblogs and Social Media (ICWSM), 2013. (Acceptance rate: 20%)

[P3] Manya Sleeper, Rebecca Balebako, Sauvik Das, Amber McConohy, Jason Wiese, and Lorrie Cranor. The Post That Wasn’t: Examining Self-Censorship on Facebook. In Proceedings of the 16th annual ACM Conference on Computer Supported Cooperative Work and Social Computing (CSCW), 2013. (Acceptance Rate: 35.6%)

[P2] Emmanuel Owusu, Jun Han, Sauvik Das and Adrian Perrig. ACCessory: Keystroke Inference using Accelerometers on Smartphones. In Proceedings of the 12th annual ACM/SIG International Workshop on Mobile Computing Systems and Applications (HotMobile), 2012. (Acceptance rate: 20.6%)

[P1] Ken Hartsook, Alexander Zook, Sauvik Das, and Mark Riedl. Toward supporting storytellers with procedurally generated game worlds. In Proceedings of the 2011 IEEE Conference on Computational Intelligence in Games (CIG), 2011.